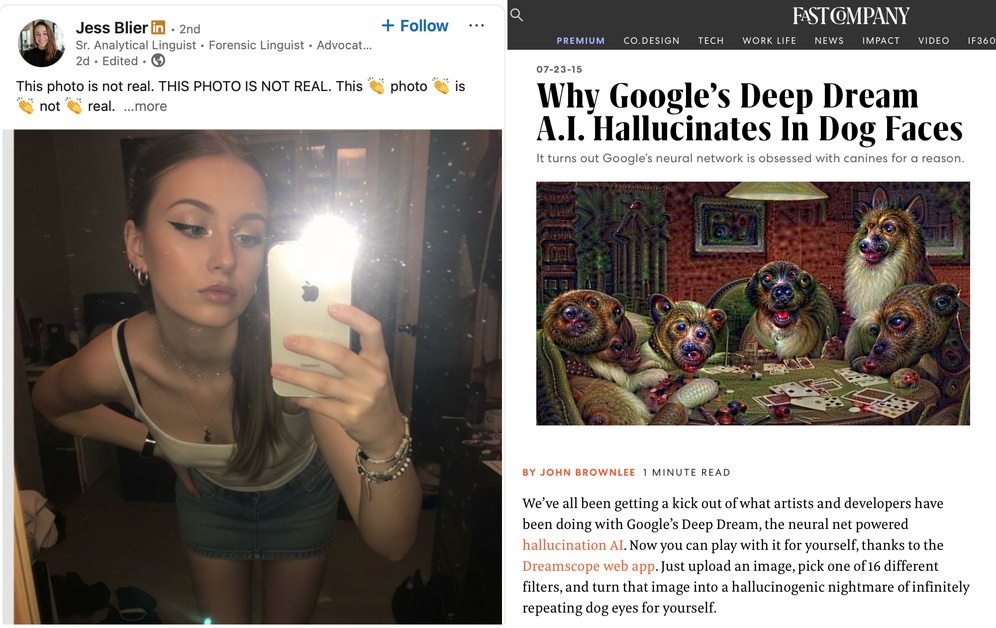

Ten years ago we were mocking image generation algorithms which produced nothing but psychedelic dog faces; now everyone older than 25 is panicking about fake pictures of bad teen bedrooms being used to win the empathy of real kids and lure them into being abducted.

WRONG-O. This could be a predator who has synthesized an entirely fabricated persona for the purposes of targeting and grooming children. These fake profiles can be used to lower your child’s guard in order to collect and exploit their personal info:

What if the kid responds to the scammer with a deepfake?

My take: Nobody under ~25 really cares about this stuff, and they shouldn’t. It will come out in the wash.

What everybody over ~25 ought to be doing is investing time in teaching kids the kinds of critical thinking they will need to survive in a world of plausible lies. This is a challenge we faced with Photoshop, with Television, with Radio, with Airbrushing and Magazines, with Telegrams, Newspapers, Pamphlets, and the Printing Press.

Somehow we’ve always adapted to change.

Just because you’re so old that you don’t have the “this might be fake” filters working for you doesn’t mean that the next generation will necessarily fall for the deception. Instead: teach the kids. Help them become critical thinkers. Tell them to expect untruth. Show them how to detect falsehoods.

Especially when those falsehoods come from politicians, activists, and other politically vested interests.

References:

- 2015: FastCompany (archived)

- 2025: LinkedIn

The LinkedIn post contains some pretty good general security advice, but I find the premise to be ill-founded.

Postscript

Yes, everyone already did the “…nobody knows you’re a dog” or “…thinks you’re a dog” jokes, 10+ years ago. It’s okay, we’re good for that, but thank you for reading this far anyway.
