Everything old is new again…

There’s an indefinite series of old nerd jokes about the hardest problems in software engineering; “naming things” and “off-by-one errors” speak to the challenges of writing software, and the impassioned, bikeshedding arguments that you will have when you build something new.

But those problems pale by comparison to what happens when you actually launch the software, and in the select world of security software the way that the world reacts to the existence of your code can be something entirely unexpected.

Or not, as in the case of this article.

The hardest problem that I’ve ever had to deal with in software development is to accept that:

- Bad people exist, and

- Bad people may use my software to their advantage,

…which I have chosen to accept on the basis that:

- There are many more good people than bad people, and

- Enabling the good people is a net win.

I first ran into this problem in 1991 when I released the Crack password cracker – a popular tool of the era, loud echoes of which are still found in several popular modern tools – and people argued on contemporary social media:

What is the extent of your liability if one of your programs is used to destroy my system? Do you wave your hands and cry “Not it’s intended use; not it’s intended audience…”? … Shareware and releasing something into the public domain is fine. HOWEVER, in the case of Crack, COPS, et. al., you could have at least ATTEMPTED to see to it that the hackers on the net have a hard time getting to it.

https://groups.google.com/g/alt.security/c/hG__C4I9i78/m/3jeGdJNBO6EJ

The above is just one example amongst many of the genre; other analogies were (to paraphrase) “releasing this software is like throwing a pile of guns into a daycare centre” and “this is like teaching people how to break into cars”, neither of which stands up well to examination of impact or consequences. Then, as now, the mass media lagged behind the conversation by several years, so that more than five years later the Daily Telegraph attempted to stoke fear of a Crack software update as a novel threat to internet security.

And today? Instead of “hackers may use this” we fret that “{drug-dealers, terrorists, paedophiles, fascists} may use this to communicate with each other!” – citing especially whichever monsters will cause the strongest reaction in our audiences.

So it is with The Verge / Newton’s channel “The Platformer”, where Casey cites his former Verge colleague Gregg Bernstein:

During an all-hands meeting, an employee asked Marlinspike how the company would respond if a member of the Proud Boys or another extremist organization posted a Signal group chat link publicly in an effort to recruit members and coordinate violence. “The response was: if and when people start abusing Signal or doing things that we think are terrible, we’ll say something,” said Bernstein, who was in the meeting, conducted over video chat. “But until something is a reality, Moxie’s position is he’s not going to deal with it.” Bernstein (disclosure: a former colleague of mine at Vox Media), added, “You could see a lot of jaws dropping. That’s not a strategy — that’s just hoping things don’t go bad.”

https://www.platformer.news/p/-the-battle-inside-signal

…and also…

“I think that’s a copout,” he said. “Nobody is saying to change Signal fundamentally. There are little things he could do to stop Signal from becoming a tool for tragic events, while still protecting the integrity of the product for the people who need it the most.”

https://www.platformer.news/p/-the-battle-inside-signal

For a few decades now I have had the quote “Everybody Deserves Good Security” as my strapline – in my Twitter bio, in my email signatures, and as a general theme of life. Other people have begun to adopt it, or have independently come to the same conclusion. It’s meant as an absolute statement, born out of the pragmatic observation that there is no actual Santa Claus list of naughty versus nice, and that it is not possible to somehow restrict access to code – restrict access to speech – such that only good people can or will use it.

Sidebar: Yes, it’s horrific, isn’t it? I am entirely content to know that some subset of people with whom I disagree – even whom I actually abhor – might use my code to do “bad things”. I am content because I believe that the responsibility for their badness lies with them, and because my software positively helps many, many other people. It’s straightforward utilitarianism.

I feel that it was sad but inevitable that Signal – with its counterculture nature and ethos – would eventually attract the kind of employee who would want to “gatekeep” access to Signal itself. You can see blatant evidence for that in that final paragraph:

“while still protecting the integrity of the product for the people who need it the most”

— yes, but who would determine that need, and how would you police them against abusing their powers? This is not a simple “yes, but” question.

This is the “Santa Claus Problem” (SCP): any ex-ante analysis of the capabilities which software gives to people will inevitably focus on the potential harms that some subset of the population might cause; any proposed solution to address that risk will demand either partitioning the users into “naughty versus nice” or weakening the content or value of the tool; and both the analysis and the proposed solutions will tend to ignore the benefits.

There are strong human impulses at play in the SCP: that some tools are “too powerful for ordinary people!” or that “we need to restrict use of this tool to people who will use it for good!” These impulses are innate for us as human beings, not least because this is how we protect our children.

We shouldn’t let children play with tools that they can’t cope with.

But – harking back to “releasing this software is like throwing a pile of guns into a daycare centre” – most people are not children, those who are children will eventually grow to be adults, speech is not a gun, software is not a gun, and harming people with either of those two generally requires the actual intercession of an idiot human being.

So my solution to the SCP is one of maximum utility: technologies which enable the most people with the best tools and the freest speech are “good”, and those which hamper or partition access to the same are “bad”.

Platforms which treat all content equally and neutrally are the gold standard in such a model. If you ever supported “net neutrality” please ask yourself how you could also be in favour of watering down the privacy value proposition of Signal for everyone, just in case some fascists, insurrectionists, or whatnot are using it?

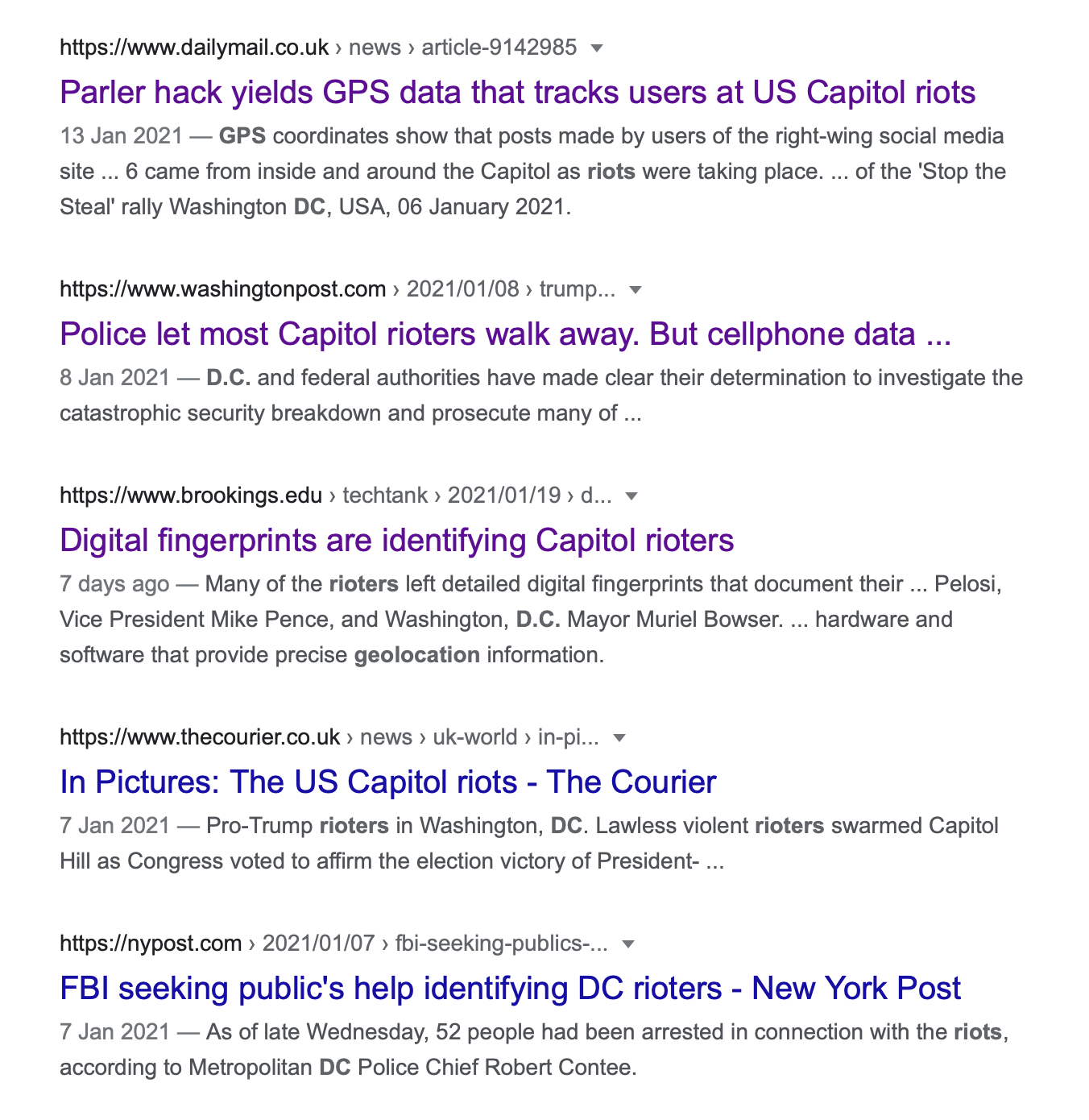

If you’re worried about their criminality, if you fear that they’re doing something illegal, there will generally be several other ways to nail them, along with a load of pre-existing and desirable “due process”.

So this is where I also feel, and fear, that Moxie is making a noose to hang himself, and perhaps Signal too, by not embracing this reality. Reactions like:

…if and when people start abusing Signal or doing things that we think are terrible, we’ll say something…

…enable the mindset inside (and outside) of the Signal Foundation that some manner of “strategy” might eventually be forthcoming to address the Santa Claus Problem, e.g.: “to fight Fascists!”.

You can see evidence for this in the article (“That’s not a strategy — that’s just hoping things don’t go bad”) and also on Twitter.

But with some 30 years of this precise argument behind me, I can only say this:

- I don’t believe that there is any good “solution” for the Santa Claus Problem.

- All proposed solutions enable worse inequities such as “censorship”.

- Failure to publicly embrace (and deliver) utilitarianism early enough will later lead to more and possibly overwhelming criticism, but…

- It’s never too soon to start.
